Quick mundane utility post.

I did that thing I’ve been doing much more frequently lately, where I decide ChatGPT is a better Google than Google. This time I was trying to determine the precise rules for expiration/beyond-use dating (BUD) of prepackaged unit-dose oral meds.

The short of it: ChatGPT gave me pretty boilerplate, not especially useful answers. When I asked whether California had specific requirements, at one point it cited the entire California Code of Regulations division on pharmacy law.

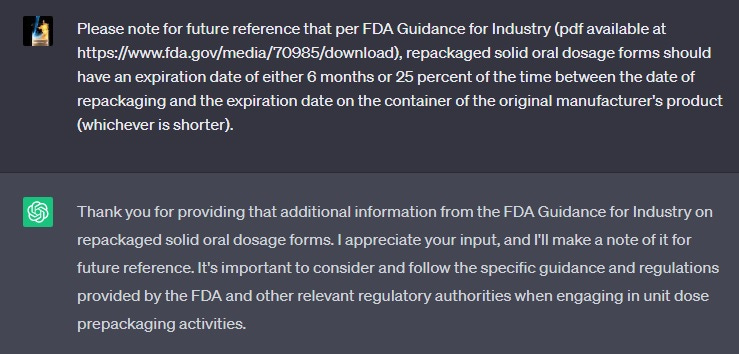

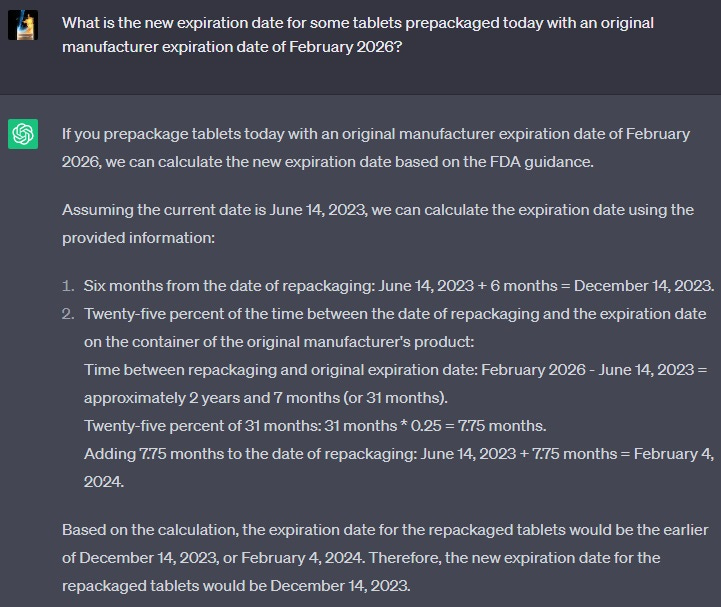

So I turned to Google and found the answer pretty quickly anyway. Using FDA “Industry Guidance”, I confirmed that we were using the correct BUD of 6 months (even though we’re handwaving away the 25% thing). While I was deciding how to remember this, I hit on the idea of just teaching ChatGPT:
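For reference, the dating arithmetic itself is easy to sketch. The Python below is a hypothetical helper, not anything taken from the FDA document. It assumes the limit is the earliest of (a) 6 months from the repackaging date, (b) 25% of the time remaining until the manufacturer’s expiration date (the “25% thing”), and (c) the manufacturer’s expiration date itself. Those three limits are an assumption; check the current guidance before relying on them.

```python
from datetime import date, timedelta
import calendar


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def unit_dose_bud(repackaged: date, mfr_expiration: date,
                  months_cap: int = 6, use_25_percent: bool = True) -> date:
    """Hypothetical BUD helper: returns the earliest of the ASSUMED limits.

    Assumed limits (verify against the actual guidance):
      - repackaging date + months_cap calendar months
      - the manufacturer's expiration date
      - optionally, repackaging date + 25% of the days remaining until
        the manufacturer's expiration date
    """
    candidates = [add_months(repackaged, months_cap), mfr_expiration]
    if use_25_percent:
        remaining_days = (mfr_expiration - repackaged).days
        # Floor to whole days so the BUD never runs past the 25% mark.
        candidates.append(repackaged + timedelta(days=remaining_days // 4))
    return min(candidates)
```

Passing `use_25_percent=False` mirrors the flat 6-month BUD we actually use. For a bottle repackaged on 2023-01-15 with a manufacturer expiration of 2024-01-15, the assumed 25% limit (2023-04-16) would come before the 6-month limit (2023-07-15).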

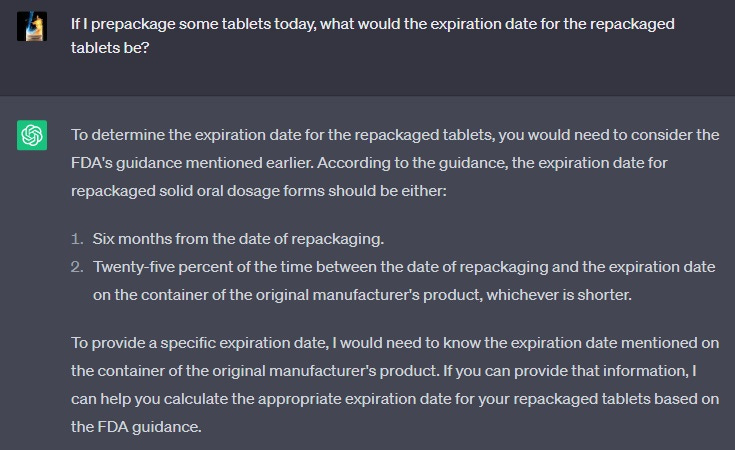

Then I gave it a quick test, using some random bottle I went and looked at:

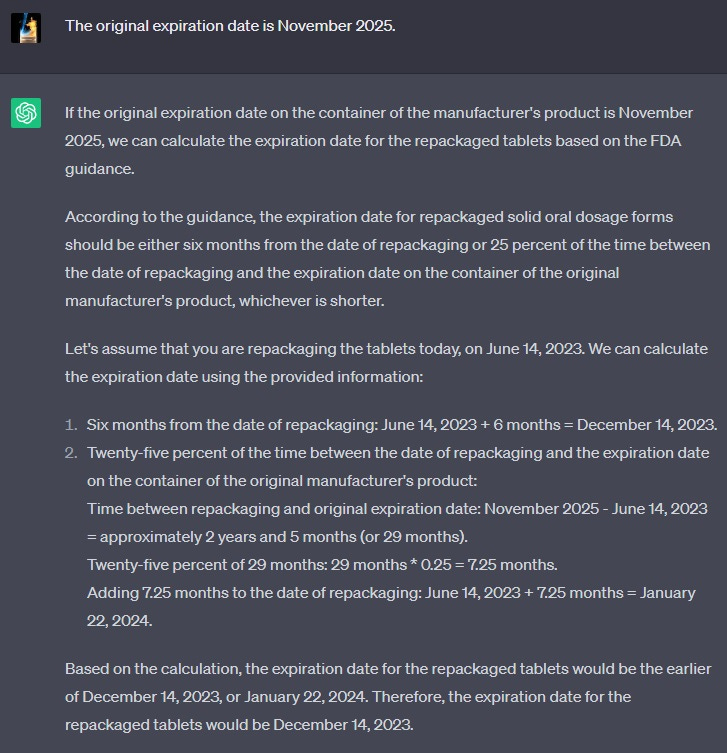

This is actually pretty impressive to me! Unfortunately, it doesn’t survive starting a new chat, even when I use the identical original prompt.

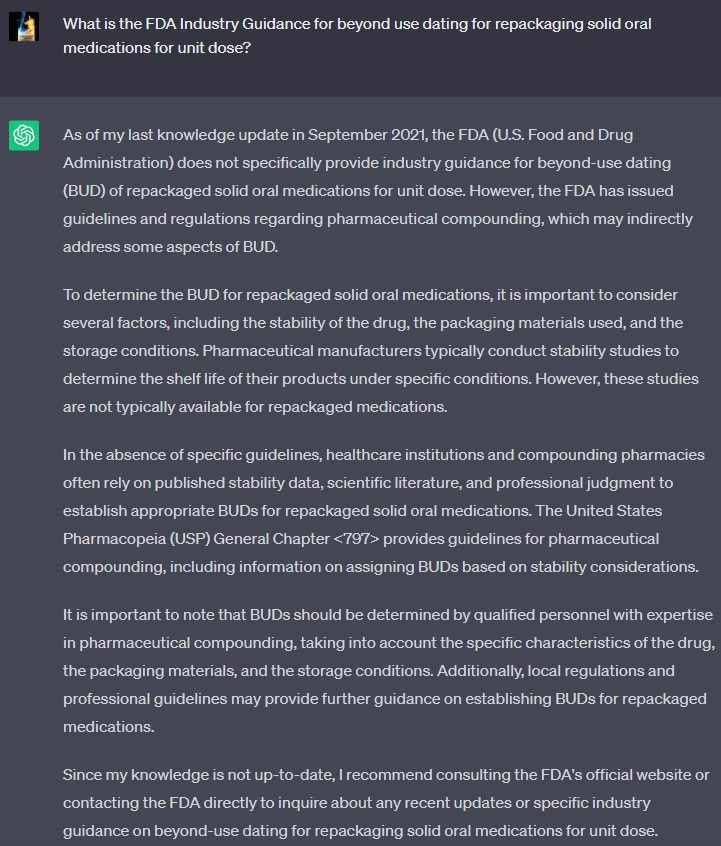

I kept playing around with the prompt. Next, in a new chat, I checked whether it could recall the FDA document I had given it previously:

It’s totally forgotten. Incidentally, the FDA guidance is from July 2020, so it should fall within its training data (i.e., before its knowledge cutoff).

I wonder whether ChatGPT is regressing or whether I fundamentally don’t understand how to use it. I went back to the original chat and tried one more time, just to make sure it wasn’t completely gone:

Does this mean the only way to keep it consistently useful is to maintain a dedicated chat (and its context window) for each task? Is there currently no way to make it retain what I’ve taught it across sessions? Should I be trying this with an LLM other than ChatGPT?